"The stock photography industry is going away"

- Thread starter JohnG

Kerryman

Member

I've been a professional photographer for over 40 years. It's how I pay my rent and buy food. I've been contributing to one particular stock library since 2004. They sell an image of mine almost every day. Three, this morning, as it happens.

The photography 'market' has always been over-saturated with sellers, and since digital cameras came along it's been almost impossible to make a living solely through stock images. ("Was there ever any other kind?" some may ask. Yes, and I have used them, and still do.)

But, you can still sell there (see above) and if you're careful about what you sell, AI can't compete. Those 3 sales this morning were all of...wait for it...famous musicians!

Of course, over-saturation is an issue with the music composition market, too, and also somewhere that AI is making inroads.

Despite that, I recently signed my first contract to produce an album of 10 cues for TV documentaries with a library. So, never say die. Just say...I can do it despite AI.

Alchemedia

Decomposer

Urgent Action Needed to Stop AI Developers From ‘Destroying’ Artists’ Careers, Says U.K. Parliament Committee

A U.K. parliamentary group calls for the passage of strong AI laws to protect artists from the nascent technology.

richiebee

Active Member

I've been working as a professional photographer for about 7 years (after 17 years in music!). I'm lucky to be salaried and I work in a marketing and communications department, although I am basically autonomous. When I took the job, I didn't think it would last me until retirement (I kind of still don't), but I didn't expect the biggest threat to come from within my own department. This isn't a knock at Kerryman or that particular industry, but I can't tell you how many times they've struggled with trying to shoehorn a piece of stock photography into a project instead of having me go take a photo. It's kind of bizarre. I've seen the paper trail of a project go on for six months trying this and that, back and forth with their unhappy clients, before they finally ask me to go take some photos, and the project is finished within a week. Not only that, but they've used actual people and places that are relevant to our environment, and because I get to understand exactly what they want to portray, they get exactly what they want. Everyone wins. Their clients are much happier with us using familiar places and people.

And yet, they don't learn from it. Their own laziness costs time and money. I've given up trying to get them to change, and instead build my own relationships with other clients to ensure a relatively busy schedule. The majority of my work comes from news and events, not the creative team, so it doesn't matter to me really what they do, but it sure is frustrating to see them cutting off their own noses.

Laziness from within will be the downfall of the creative industry, not AI itself, which can actually be used in much more helpful ways.

Kerryman

Member

> This isn't a knock at Kerryman or that particular industry, but I can't tell you how many times they've struggled with trying to shoehorn a piece of stock photography into a project instead of having me go take a photo.

Stock photography is only a part of how I make a living, and not the biggest part.

As a freelance photographer, I have had to build up a long client list, from whom I get regular commissions. This includes newspapers, magazines, book publishers and large (some international) organizations. I've never done weddings and limit my portraiture work to environmental types, for the previously mentioned clients.

Not only has stock photography impinged negatively on my commissioned work over recent years, it has also provided less of an income for me. Clients will buy a stock image (usually not mine) rather than book me for half a day.

When my stock images sell, they earn a fraction of what they used to, because libraries will do 'blanket fees', which allow a client to buy a set number of images a month for a fixed fee. If my image is used by that client, I might get $20 where I would have got $150 or more before the blanket deal.

So yes, I feel your pain @richiebee but trust me I hurt just as badly, for slightly different reasons (and I have done for a lot more than 7 years). That said, I'd rather be freelance than salaried - because putting all your eggs in one basket can really limit your opportunities in this business. Don't rely on one client, because if they go bust, so do you.

Incidentally, I've just seen a post in a composer's forum about blanket deals in music libraries. Apparently, some composers with hundreds of placements for cues are seeing only a few dollars return, rather than hundreds, because the library is splitting their upfront blanket fee among all their writers.

richiebee

Active Member

> That said, I'd rather be freelance than salaried - because putting all your eggs in one basket can really limit your opportunities in this business. Don't rely on one client, because if they go bust, so do you.

I suspect our take on photography and why we get up in the morning is very different. I found out a very long time ago that self-employment is not for me.

Kerryman

Member

> I suspect our take on photography and why we get up in the morning is very different. I found out a very long time ago that self-employment is not for me.

I suspect it's more our take on employment that is different.

See this article, and yours:

www.creativebloq.com

www.creativebloq.com

These two articles are a great example of why the courts will never rule that AI companies must only use copyright cleared content in training and stop the great replacement.

In the article, their definition of ethical is not about asking creators for permission, or even paying them. Shutterstock and Getty, mentioned in these articles, made their own AI model of their content, made by the same people that think these companies might win the legal battle for them. But they're the ones that you'll have to fight as well if you want to stop all this copyrighted material to be used for training

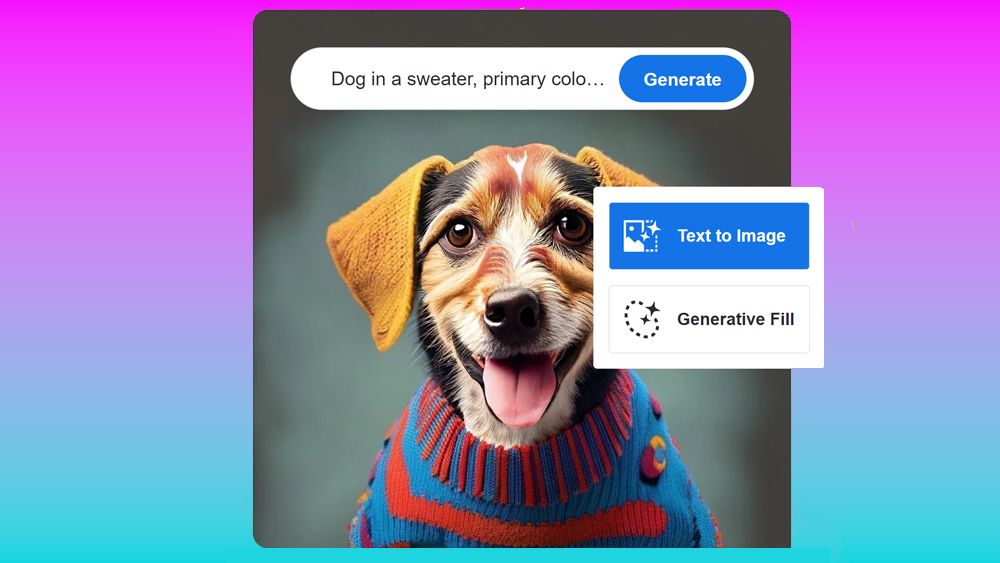

Look at what Adobe are quoted as saying in the above article:

So when companies like Midjourney and OpenAI use massive datasets of imagery scraping the internet for everything they can get their hands on, it's "theft" and "unethical". But when Shutterstock, Adobe, and Getty Images do it to their own content creators, that's fine?

But don't worry, if Adobe used your images to train their AI, they'll throw a few dollars at you and call it a "bonus". That will make up for losing your entire industry. We know it can't possibly be more than a few dollars because otherwise they'd have bankrupted themselves.

It's sad that artists (and in-denial composer producers) are thinking they'll be fought for in court, when really these companies have zero interest in fighting for them and are currently (or actively) already betraying them. They care about THEMSELVES being replaced by AI, not you! They cared that someoneELSE "stole" your work for training their AI. Like Adobe, Universal Music will do the same thing. They'll talk about how much this harms artists on the one hand then casually train their own AI on all your work and say they're the ethical ones and expect you to be grateful. Here have $20.

It should be even more insulting because unlike the AI companies, these company's are the ones claiming it's theft and bad for artists, yet they'll go ahead and do the same thing anyway to their creators and act like it's effectively different.

Lets say you have music published with Universal Music. How much would you need if Universal Music made an AI like Udio (only better because it's the future), trained on every track in their collection, and suddenly you find you've been paid a hundred bucks or something for all your work to be used in the training?

The best outcome for these companies is they get those like OpenAI to license their content to them. That's why they're so casual about making their own models, they know the court's legal case isn't going to actually make THEIR models unlawful.

The faster creators give up on this fantasy that they can stop this the better off they'll be. If you worked really hard and were really successful, you might be able to marginally slow down a company or two for maybe 6 months. By the time these cases even reach a legal precedent open source will have already made it impossible to go back, even if you wanted to.

So Adobe Firefly AI isn't as squeaky clean as it seemed

Should we really be surprised?

These two articles are a great example of why the courts will never rule that AI companies must only use copyright-cleared content in training and stop the great replacement.

In the article, their definition of ethical is not about asking creators for permission, or even paying them. Shutterstock and Getty, mentioned in these articles, built their own AI models on their own content, content made by the same people who think these companies might win the legal battle for them. But they're the ones you'll have to fight as well if you want to stop all this copyrighted material from being used for training.

Look at what Adobe are quoted as saying in the above article:

Hayward also noted that Adobe Stock contributors who submitted AI-generated imagery would qualify for Adobe's 'Firefly bonus', which it paid to contributors whose content was used to train the first public version of the AI model.

So when companies like Midjourney and OpenAI use massive datasets of imagery scraping the internet for everything they can get their hands on, it's "theft" and "unethical". But when Shutterstock, Adobe, and Getty Images do it to their own content creators, that's fine?

But don't worry, if Adobe used your images to train their AI, they'll throw a few dollars at you and call it a "bonus". That will make up for losing your entire industry. We know it can't possibly be more than a few dollars because otherwise they'd have bankrupted themselves.

It's sad that artists (and in-denial composer producers) think they'll be fought for in court, when really these companies have zero interest in fighting for them and are already actively betraying them. They care about THEMSELVES being replaced by AI, not you! They cared that someone ELSE "stole" your work for training their AI. Like Adobe, Universal Music will do the same thing. They'll talk about how much this harms artists on the one hand, then casually train their own AI on all your work, say they're the ethical ones, and expect you to be grateful. Here, have $20.

It should be even more insulting because, unlike the AI companies, these companies are the ones claiming it's theft and bad for artists, yet they'll go ahead and do the same thing anyway to their creators and act like it's effectively different.

Let's say you have music published with Universal Music. How much would you need if Universal Music made an AI like Udio (only better because it's the future), trained on every track in their collection, and suddenly you find you've been paid a hundred bucks or something for all your work to be used in the training?

The best outcome for these companies is that they get the likes of OpenAI to license content from them. That's why they're so casual about making their own models: they know the court cases aren't going to make THEIR models unlawful.

The faster creators give up on this fantasy that they can stop this, the better off they'll be. If you worked really hard and were really successful, you might be able to marginally slow down a company or two for maybe 6 months. By the time these cases even reach a legal precedent, open source will have already made it impossible to go back, even if you wanted to.

Wunderhorn

Senior Member

As long as we as a society keep looking up to the principle of (largely) unregulated capitalism as the holy grail, the winner will be clear: whatever is cheaper and faster and more lucrative will be allowed to trample on ethics, fairness and human dignity. We have made our bed and now we have to lie in it.

AI is taking over so many sectors, so quickly, we don't even understand all the implications. When we wake up it might be too late.

Yes, a fascinating wonder machine, this technology. But are we sufficiently fit to tame the beast?

aaronventure

Senior Member

There's gonna be a market for stock photos of musical instruments for a bit longer... try getting an AI to generate a legit image of a French horn.

The fingers are mostly there but it just can't get the tubing right

Pier

Senior Member

> But when Shutterstock, Adobe, and Getty Images do it to their own content creators, that's fine?

It's not fine for a content platform to do these things but people who uploaded content signed a contract. They gave Adobe etc permission to use their content. It's very different from AI companies getting content from everywhere to train their models without permission and profiting from that.

And then there are content platforms like YouTube, SoundCloud, Instagram etc. who, as far as I know, still haven't disclosed if and how they are using user content to train AI models.

> There's gonna be a market for stock photos of musical instruments for a bit longer... try getting an AI to generate a legit image of a French horn.
>
> The fingers are mostly there but it just can't get the tubing right

It took less than a year for AI to fix the eyes, and get fingers generally accurate, after it got good enough that people started paying attention and laughing about it. Well, they're not laughing nearly as much anymore.

"a bit longer" may really be a "bit".

You can't think in terms of safety when we're on this sort of timescale. Is it really safe if it's another 1 year? 2 years? Even 5 years is a LONG time for AI development, not very long for us.

aaronventure

Senior Member

> It took less than a year for AI to fix the eyes, and get fingers generally accurate, after it got good enough that people started paying attention and laughing about it. Well, they're not laughing nearly as much anymore.
>
> "a bit longer" may really be a "bit".
>
> You can't think in terms of safety when we're on this sort of timescale. Is it really safe if it's another 1 year? 2 years? Even 5 years is a LONG time for AI development, not very long for us.

Yeah, well, I guess we'll see. But it's always a service issue. Scrolling through stock photos is a lot faster than waiting for AI to generate images for you. And initial outputs are not always production ready, especially if you want anything bigger than 512x512.

Stock photographers might just have to change jobs to stock AI artists.

But the large 24MP+ shots aren't going anywhere for now. AI image generation is the most expensive AI workload by far. Right now I think 24MP+ generation would require stupid amounts of VRAM, and I can't quite imagine the model size and training time + cost.

Although I don't know how big the market is for such high resolution stock shots.

We'll see, sure. The whole thing is developing at rather breakneck speeds.
